Q&A about CGM Accuracy

Responses to reader questions and comments about the G6 and G7 accuracy ratings

In my last post, “Continuous Glucose Monitors: Does Better Accuracy Mean Better Glycemic Control?,” I presented the results of wearing both the Dexcom G6 and G7 simultaneously for a month. The net result was that, despite the G7’s purported “improved accuracy” over the G6, my glycemic management was worse. I attributed this to the fact that the G7’s highly erratic readings made it difficult to make in-the-moment decisions on insulin or carbohydrates because it wasn’t clear where my systemic glucose levels were moving, and how fast. With the G6, I could see patterns and respond to them quickly.

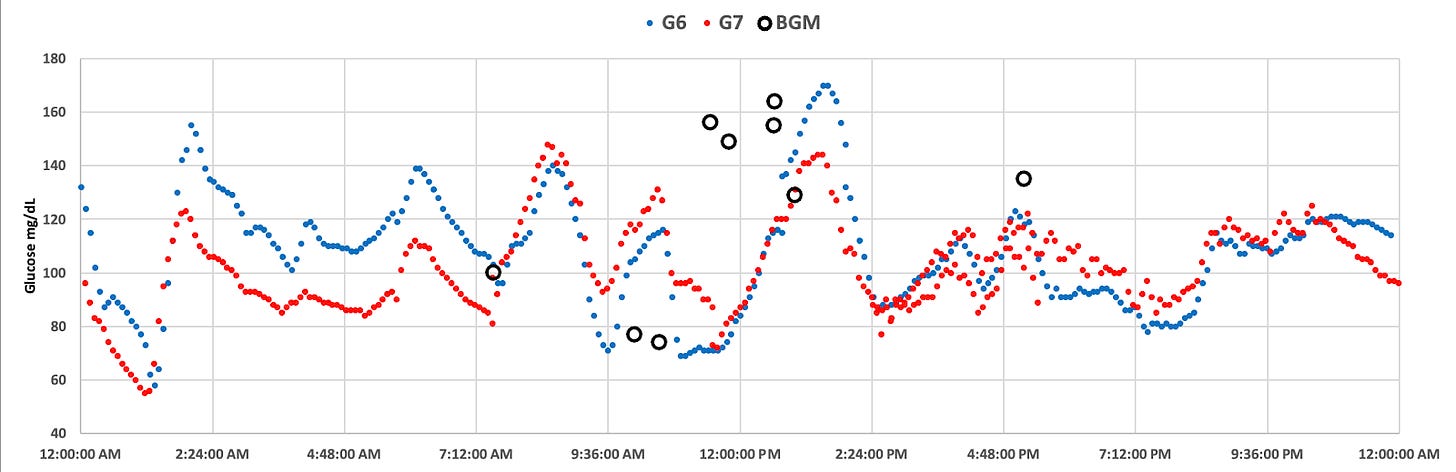

An example day showing the difference between the G6 and G7 is shown in this chart:

The G7 (red) shows more erratic glucose values than the G6 (blue)

The main thesis of the article was that the G6 and G7 brought to light a great misconception people have about glucose levels in the body: that a single measurement is systemic, much like body temperature or cholesterol levels. But glucose is not like that in the body. Therefore, the “accuracy” of a reading, whether from a CGM or a BGM (blood glucose monitor), generally does not give actionable information, except for extreme values far outside the normal range. Because glucose is highly volatile within the body, it is tempting to assume that the G7’s “greater accuracy” is better.

In reality, however, nearly the exact opposite is the case. Greater accuracy can be clinically deceptive, because any given reading does not give reliable information about systemic glucose levels or their trajectory. The G7’s glucose patterns show this very problem.

To measure systemic glucose movements, one must take into account multiple readings and apply a formula using statistical probabilities, which the G6 was very good at. One could see a G6 glucose pattern and rely on its direction and rate of change in order to make good management decisions “in the moment.” Its degree of ‘accuracy’ on individual readings may be different from a single-measurement reference platform, but that was never its original goal, and correctly so.

The G7, by contrast, may pair better with a reference platform on a per-read basis, but one would not be able to discern systemic patterns from its readings for roughly 90 minutes, long after preemptive action would have been advised.
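To make the idea of reading a trend from multiple readings concrete, here is a minimal Python sketch. It is not Dexcom's algorithm, which is proprietary. It only contrasts two generic ways of estimating rate of change: from each consecutive pair of readings, and from a least-squares line fitted across a window. The function names and the readings are made up for illustration.

```python
from statistics import mean


def fitted_rate(readings, interval_min=5.0):
    """Least-squares slope, in mg/dL per minute, over equally spaced readings."""
    if len(readings) < 2:
        raise ValueError("need at least two readings")
    times = [i * interval_min for i in range(len(readings))]
    t_bar, g_bar = mean(times), mean(readings)
    num = sum((t - t_bar) * (g - g_bar) for t, g in zip(times, readings))
    den = sum((t - t_bar) ** 2 for t in times)
    return num / den


def pairwise_rates(readings, interval_min=5.0):
    """Rate of change implied by each consecutive pair of readings."""
    return [(b - a) / interval_min for a, b in zip(readings, readings[1:])]


# Made-up readings (mg/dL), five minutes apart. Both series rise from 100 to 125.
smooth = [100, 105, 110, 115, 120, 125]
jumpy = [100, 112, 104, 121, 113, 125]

for name, series in (("smooth", smooth), ("jumpy", jumpy)):
    print(name, "pairwise:", pairwise_rates(series),
          "fitted:", round(fitted_rate(series), 2))
```

With the jumpy series, the pairwise rates flip sign from one reading to the next, while the fit across the whole window recovers a rise of a bit under 1 mg/dL per minute. The cost is that the fit needs several readings before it says anything useful.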

Now, while all of this is technically factual, the reality is that most diabetics don’t really micromanage their diabetes in such small time intervals, a factor that I discuss at length in my original article. The majority of diabetics may not really see any difference in glucose management between the G6 and G7.

But for those who (like me) are in tight glycemic control, the G7 is a step backwards. As it turns out, the same goes for those who aim to use future automated insulin pumps. That is somewhat ironic, because it is rumored that the reason Dexcom moved away from the G6 calculations to the erratic G7 readings was specifically to allow pump manufacturers to work with “raw data” and generate their own (internal) algorithms to forecast glucose trends. Indeed, this was the most common question I received:

How would the G7 perform with a closed-loop system?

I didn’t address closed-loop systems (CLS) because their performance and effectiveness rely on more than just glucose values from a CGM, and that would have been an article on its own. In fact, I have since written that article, “Benefits and Risks of Insulin Pumps and Closed-Loop Delivery Systems.”

Whether you’re a human or an algorithm, optimizing glucose levels relies on knowing systemic glucose movement and the rate of change. While you, the human, may not be interested in watching your CGM data religiously, the CLS is. Therefore, for it to do its job well, the CLS algorithm needs to look at these short time windows to provide sufficient insulin when needed, and to stop administering insulin when appropriate.

The goal that pump manufacturers had hoped for is to use the “accurate” G7 data and do their own internal analysis to infer future glucose trends. While that goal is admirable, they can’t possibly achieve it, because the G6 did not simply average subsequent glucose values to make a smooth curve. Its analysis factored in a large amount of proprietary knowledge gained by closely monitoring glucose movements within fluids, and incorporated complex physics formulas to more accurately infer each reading from five minutes’ worth of data gathered between readings. This is the reason why G6 sensors had to be coded: different algorithms would be used based on the manufacturing variability characteristics of the enzymes on each individual sensor.

The G7, by contrast, does none of this—it aimed solely to translate the raw data into a glucose value, which happens to correspond more closely to the external reference platform in order to achieve a desired MARD value. This is a remarkable step backwards for glycemic management, both for pump users and MDI users like myself.

At the top of the list of risks is hypoglycemia, where the G7’s erroneous data causes either a human or an algorithm to incorrectly calculate a potentially large and dangerous insulin bolus. Once insulin is in, there’s no taking it out. And if you’re not paying close attention to systemic glucose levels (which the G6 was more adept at tracking), you’re going to have a very rough night.

Is the G7 really more accurate? The data suggests otherwise.

I got an email from someone who pointed out a few things that I hadn’t immediately considered.

He began by asking whether I had correlated my G6 values with lab-based A1c values. The theory is that, if the two paired closely, then the G7’s difference would mean that either 1) my G6 and the A1c tests are coincidentally inaccurate in exactly the same way, or 2) the G7’s accuracy is overstated.

There are several separate things going on. First, the HbA1c test, which measures the average amount of glucose attached to red blood cells over a 90-day period, is itself a complicated topic, which I cover in detail in “HbA1c Tests and T1D: The Good, The Bad and the Ugly.” As it pertains to this question, one’s A1c level and their actual average glucose levels may not correlate due to a variety of factors, but in my case, they have always correlated precisely to what the G6 had indicated.

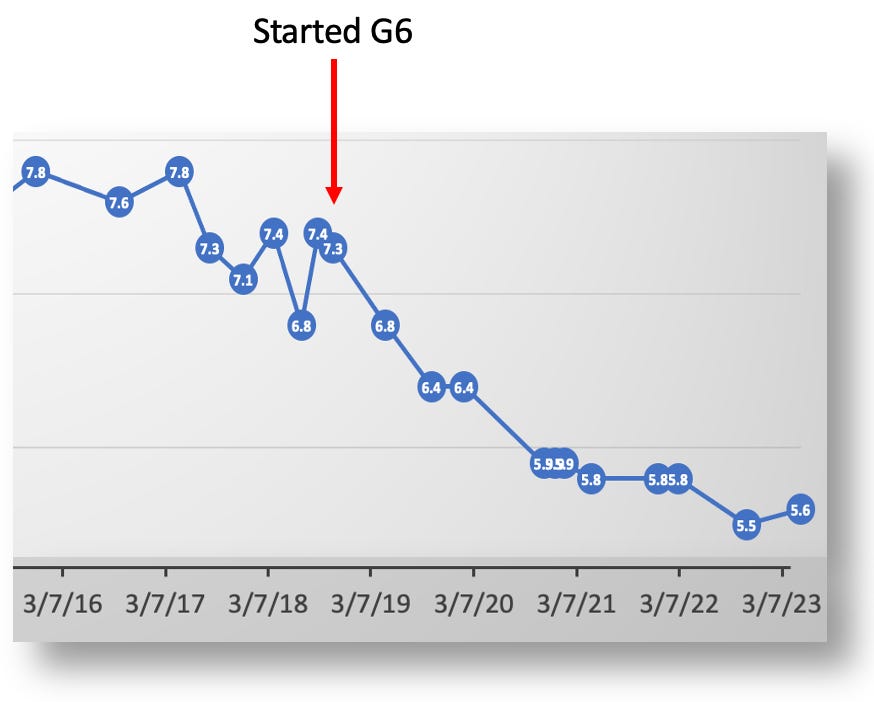

When I started using the G6 in 2018, its predicted values not only matched my A1c lab tests, but also allowed me to reliably adjust my diet, exercise and insulin in ways that vastly improved my glycemic control. By late 2020, my A1c had dropped to <6%, and now, in 2023, I’m between 5.5% and 5.8%.

My A1c levels after 2018 dropped with the help of data from the Dexcom G6.

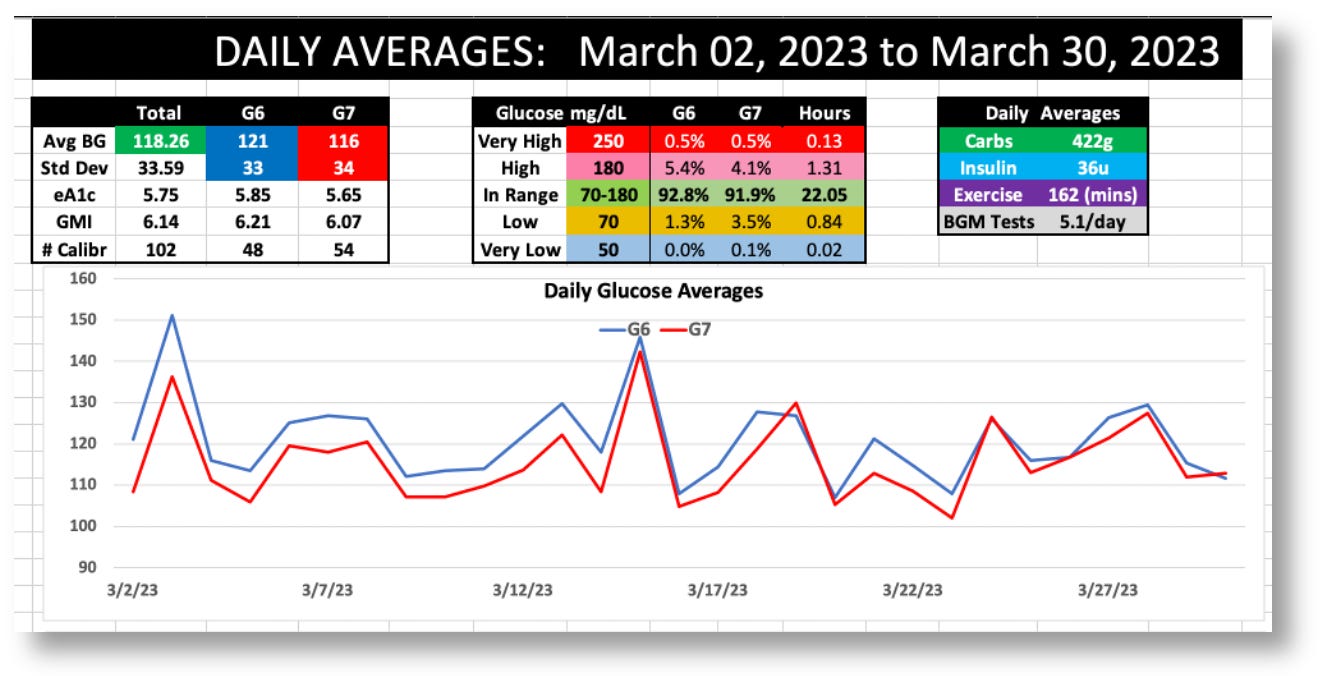

These lab-based HbA1c tests were performed at Quest, Labcorp, and UCSF medical center, and each result correlated 100% to the averages reported by my G6. And yet, in my experiment wearing both the G6 and G7, the G7’s readings were 4.3% lower than the G6, as shown in this dashboard from my data:

Dashboard stats for the G6 and G7 during March 2023

The G6 averaged 121 mg/dL, versus the G7’s 116, and the standard deviations (SD) were 33 vs. 34, respectively.
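As a quick check on these dashboard numbers, the sketch below works out the percentage gap between the two averages and, as an extra that is not in the dashboard, the coefficient of variation (SD divided by mean) for each sensor. The variable names are just for illustration. Note that the size of the gap depends on which sensor is used as the baseline.

```python
# Dashboard figures quoted above (mg/dL).
g6_mean, g6_sd = 121.0, 33.0
g7_mean, g7_sd = 116.0, 34.0

gap = g6_mean - g7_mean                  # 5 mg/dL
gap_vs_g6 = 100 * gap / g6_mean          # gap as a share of the G6 average
gap_vs_g7 = 100 * gap / g7_mean          # gap as a share of the G7 average

# Coefficient of variation: spread relative to the mean, in percent.
g6_cv = 100 * g6_sd / g6_mean
g7_cv = 100 * g7_sd / g7_mean

print(f"gap: {gap:.0f} mg/dL ({gap_vs_g6:.1f}% of G6, {gap_vs_g7:.1f}% of G7)")
print(f"CV: G6 {g6_cv:.1f}%, G7 {g7_cv:.1f}%")
```

The gap comes out to about 4.1% of the G6 average or about 4.3% of the G7 average, so the 4.3% figure corresponds to using the G7 average as the baseline.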

Because I didn’t run this experiment for 90 days, I cannot get an A1c test and confirm whether the results would correlate to the G6 or G7. But it does remain an outstanding question: If over 25 years of A1c tests were consistently off by precisely the same amount that the G6 was off, then that would be remarkable. Since the G7’s readings are 4.3% lower than the G6 readings, I’m going to presume that the G7 is off.
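For readers who want to see how an average glucose value maps onto an A1c value, one widely used conversion is the ADAG regression: estimated average glucose in mg/dL = 28.7 × A1c − 46.7. The sketch below applies it in both directions. It is a population-level estimate, so individual results can differ for the reasons mentioned above, and a one-month CGM average is not the same thing as a 90-day A1c. The function names are hypothetical.

```python
def a1c_to_eag(a1c_percent):
    """Estimated average glucose (mg/dL) from A1c (%), per the ADAG regression."""
    return 28.7 * a1c_percent - 46.7


def mean_glucose_to_a1c(mean_mg_dl):
    """Inverse of the ADAG regression: A1c (%) implied by a mean glucose (mg/dL)."""
    return (mean_mg_dl + 46.7) / 28.7


# A1c range and one-month sensor averages quoted in this post.
for a1c in (5.5, 5.8):
    print(f"A1c {a1c}% -> about {a1c_to_eag(a1c):.0f} mg/dL average")

for label, avg in (("G6", 121), ("G7", 116)):
    print(f"{label} average {avg} mg/dL -> A1c of about {mean_glucose_to_a1c(avg):.2f}%")
```

This is only a rough cross-check and no substitute for the 90-day lab comparison described above.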

Whether or not this is the case, it’s an opportunity to raise, once again, the definition of the word “accurate.” Remember that Dexcom’s clinical trial only attempted to demonstrate how closely the G7’s individual readings matched those of a reference platform: the YSI 2300 Stat Plus glucose analyzer.

The G7 matched the YSI values with an average MARD rating of ~8.8% (compared to the G6’s 9%), but those figures are each relative to the reference platform. Compared with each other, the two sensors’ MARD values are only 0.2 percentage points apart, a difference of just 2.27%. And even then, that’s the best MARD the G7 was able to achieve. As I stated in my first article, Dexcom’s G7 clinical trial showed a very wide range of MARD values, which got progressively worse as glucose levels rose, maxing out at over 30%. That’s a pretty broad range. In other words, “accuracy” is not only very hard to really get right, but its own variability suggests that looking too deeply into these numbers is largely futile. There’s just too much noise in the signal.
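For anyone unfamiliar with MARD (mean absolute relative difference), here is a minimal sketch of how such a figure is computed from paired sensor and reference readings, followed by the arithmetic behind the 2.27% figure. The paired readings are made up and the function name is hypothetical. This is not Dexcom's trial data or analysis code.

```python
def mard(sensor, reference):
    """Mean absolute relative difference, in percent, over paired readings."""
    if not sensor or len(sensor) != len(reference):
        raise ValueError("need equal-length, non-empty lists of paired readings")
    ratios = [abs(s - r) / r for s, r in zip(sensor, reference)]
    return 100 * sum(ratios) / len(ratios)


# Made-up paired readings (mg/dL): reference analyzer vs. sensor.
reference = [100, 150, 200, 250]
sensor = [110, 135, 210, 240]
print(f"MARD of the made-up pairs: {mard(sensor, reference):.2f}%")

# The two MARD figures quoted above.
g6_mard, g7_mard = 9.0, 8.8
gap_points = g6_mard - g7_mard            # difference in percentage points
gap_relative = 100 * gap_points / g7_mard  # difference relative to the G7 figure
print(f"gap: {gap_points:.1f} points, or {gap_relative:.2f}% relative to the G7's MARD")
```

Because each pair contributes its own relative error, a single MARD figure can hide a wide spread of errors across glucose ranges, which is the point made above about the trial's range of values.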

Nevertheless, it still provokes the question: If the G7 is similar enough to the G6, why is it so consistently lower for each day of the month? I can’t answer that directly, but I can say that, whatever the reason, it speaks even more persuasively about the value of tracking systemic glucose movements, not individual readings. Accuracy, even if achieved at 100%, is not a useful tool for managing T1D.

Why doesn’t Dexcom do “data smoothing,” similar to what it did for the G6 to reduce the erratic readings?

Dexcom stopped data smoothing in the G6 with v.1.9 of the app, and the kind of smoothing they were doing was extremely superficial. (Look at the graphs Dexcom uses in their announcement–they remove little bumps here and there.) The G6 data today is still considerably smoother than the G7 because of how the algorithm interpolates data to show “systemic glucose movements” (the very technique needed for optimal glucose control). Again, I discuss that in my original article in the section titled, “CGMs and the ‘precision decision’”.

Why Dexcom is choosing this route is another matter entirely, so I defer back to my original article.

The G7 sensor wire is different from the G6, so wouldn’t that affect the readings?

Many pointed me to the fact that the G7 physically samples interstitial fluids differently than the G6, which they attributed to a design flaw that wasn’t apparent till the G7 was released to the broader public. This article from diabettech.com (which I’ve seen echoed in other forums) explains the unintentional design error this way:

“In the G6, and previous Dexcom devices, the sensor is at an angle into the body tissue. In the G7, it is vertical. I assume that this somehow makes it less stable and more prone to move within the scar sheath that it makes on entry. But that is only speculation. Since then I’ve heard that others have discussed this issue directly with Dexcom, and had a similar opinion in response.”

Having worked in medical diagnostics, I know that such “mistakes” don’t happen. It is impossible to go through the incredible amount of work, time, expense, and trials, and finally come out with a product that shows such obviously recognizable data problems, without someone having seen those problems much earlier (in the prototype stage).

There may well have been good reasons for this new method of sensor wire insertion, but so far as we can see, it’s unrelated to the volatile glucose values.

The G7’s weak battery can explain a lot of problems.

Another theory proffered by someone who emailed me directly (and happens to be an electrical engineer in the diagnostics industry) is that the G7’s battery is just too weak.

As a general principle, when battery levels are low, their voltage is more volatile, which affects the delivery and receipt of electronic signals between the battery and the enzymes on that sensor wire. Therefore, the erratic readings could be due to erratic voltages. He supports his thesis with two separate but highly correlated events.

First, it’s well established that the G7’s Bluetooth connectivity is very weak, which has been confirmed by many, including myself. When my G7 is on my abdomen and I put my phone in my back pocket, I get a “signal lost” alert. Similarly, if my G7 is on the back of my arm and I put the phone in my front pocket, I also get a signal loss alert. Bluetooth range depends on transmit power, so if the battery is so weak that it can’t even connect around your body, as my contact tells me, surely that will produce erratic voltages, which will produce unreliable signals.

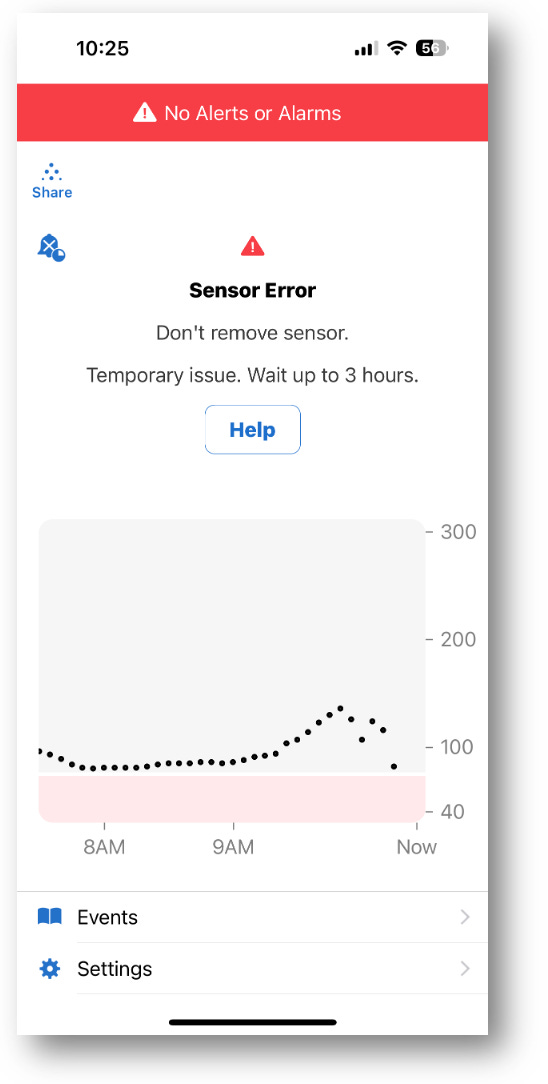

He also says this same phenomenon can be seen in the G6 if its transmitter battery happens to be low, which can happen when it nears the end of its three-month window. Here’s a recent example of mine. (The G6’s error message is different from the G7’s.)

A weak G6 transmitter battery can produce erratic readings similar to the G7’s.

My contact told me he disassembled the G7 and the G6 transmitter, tested the battery voltage of each, and confirmed that the G7’s is considerably weaker. However, he strenuously emphasized that a weaker battery does not itself prove that it causes the G7’s erratic readings. He has no idea how much voltage is actually required for the G7 system to work; that’s proprietary information. He only knows it’s weaker than the G6’s, and that the G7’s Bluetooth connectivity is poor.

Despite how tempted I am to believe this explanation for the erratic readings, it raises more questions than answers. First, erratic data and poor Bluetooth connectivity should have raised a red flag with someone in quality control much earlier in the manufacturing and distribution process. If it had, it would have triggered a review of the supply chain (battery suppliers) among other things, and the problem would have been corrected, or the product delayed. Did that happen? If so, what was the outcome?

My contact speculated that the G7 had been delayed a number of times due to the pandemic, which hit electronics supply chains pretty badly. The production of cars, appliances, and anything else that used microchips was delayed, and sub-quality replacements were circulating worldwide. It could have been that inferior batteries were eventually given to Dexcom by the time they finally went to production. But again, this is highly speculative, despite the fact that it’s happened with many other companies.

As logical and reasonable as this sounds, it’s just too far-fetched for my sensibilities.

If the problem was known, and the company decided to source its batteries from another supplier, they would have been required by the FDA to document this change and put the product back into the review process. Did they? Maybe they did. By law, if the physical parts changed in any way between the documented manufacturing process the company submitted to the FDA and the product that ultimately came to market, the company would be heavily fined and the FDA approval withdrawn (not to mention the reputational damage). But if they did, and this changed the G7 readings, that would have set the company back even further.

For all these reasons, whatever the situation is with the G7’s battery, it was–and always has been–a known factor that the company is–and always has been–aware of. Whatever the G7’s faults (or, if you like, “advantages”), the sensor as we see it today is intentional.

All that said, Dexcom is well aware of the complaints about the weak Bluetooth signal, and it’s hard to believe that can be solved in any other way than a stronger battery. That will probably require a new FDA application, but the company will likely demonstrate that the new battery is not going to affect the G7’s data, which will streamline the process to make it quick, easy and painless. Now, if it happens that the stronger battery changes the data as a result, the company will be in a bit of a pickle, and they’re going to have to figure out how to deal with that.

Summary

My article comparing the G6 and G7 was intended to present a greater concept: glucose in the body is highly volatile and not evenly distributed, which makes it hard to measure at a systemic level. Individual readings, regardless of accuracy, have very little useful purpose. What you really want to see are glucose trends, as those are what allow you to track systemic glucose movements, which leads to making better management decisions. The G6 is very good at showing trends, whereas the G7 requires far too many consecutive readings before useful trends can be identified.

If Dexcom is going to discontinue the G6, then I certainly hope they make G6-like data charting available as an option in the G7.

Your article comparing the G6 to G7 was extremely helpful for my son. He was trying to explain the issues he was having with the g7 since transitioning from G6 in Jan but it was hard to understand. Your article essentially spelled out the exact issue he was trying to describe in a very clear way and we switched back to the G6 immediately. He has always managed to time-in-range and he was really frustrated with what the G7 was doing to those ranges.